Image credit: ACM TOCHI

Abstract

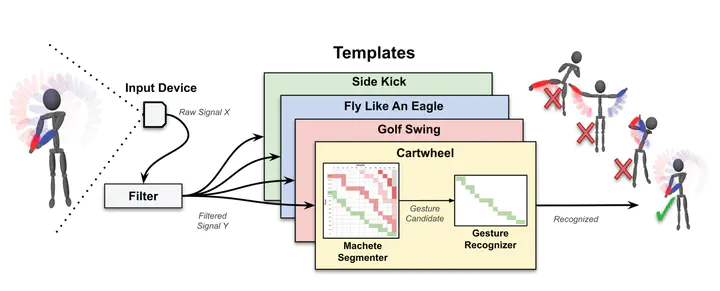

We present Machete, a straightforward segmenter that can be used to isolate custom gestures in continuous input. Machete uses traditional continuous dynamic programming with a novel dissimilarity measure to align incoming data with gesture class templates in real time. Machete's advantages over alternative techniques are that it is computationally efficient, accurate, and device-agnostic, and that it works with a single training sample. We demonstrate Machete's effectiveness through an extensive evaluation using four new high-activity datasets that combine puppeteering, direct manipulation, and gestures. We find that Machete outperforms three alternative techniques in segmentation accuracy and latency, making it the best-performing segmenter in our evaluation. We further show that, when combined with a custom gesture recognizer, Machete is the only option that achieves both high recognition accuracy and low latency in a video game application.
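The abstract says Machete aligns incoming data with gesture templates using continuous dynamic programming. As a rough illustration of that general idea only, the sketch below implements a streaming open-begin dynamic time warping (continuous DP) spotter for a single template in Python. The Euclidean `dissimilarity` function, the raw-cost `threshold`, and the class name `ContinuousDPSpotter` are hypothetical placeholders introduced here; Machete's novel dissimilarity measure and its acceptance criteria are not described in this section and are not reproduced.

```python
import math
from typing import Optional, Sequence, Tuple

Point = Tuple[float, ...]


def dissimilarity(a: Point, b: Point) -> float:
    # Hypothetical stand-in (Euclidean distance); NOT Machete's novel measure.
    return math.dist(a, b)


class ContinuousDPSpotter:
    """Hypothetical streaming open-begin DTW (continuous DP) for one template."""

    def __init__(self, template: Sequence[Point], threshold: float) -> None:
        self.template = list(template)
        self.threshold = threshold  # hypothetical acceptance threshold on raw cost
        m = len(self.template)
        self.prev_cost = [math.inf] * m  # cumulative cost column for frame t-1
        self.prev_start = [0] * m        # frame index where each best path began
        self.t = 0                       # index of the next incoming frame

    def update(self, x: Point) -> Optional[Tuple[int, int, float]]:
        """Consume one frame; return (start, end, cost) if the template matches."""
        m = len(self.template)
        cost = [math.inf] * m
        start = [0] * m
        for j, y in enumerate(self.template):
            d = dissimilarity(x, y)
            if j == 0:
                # Open begin: a match may start at any frame, so the first
                # template point never inherits cost from earlier frames.
                cost[0], start[0] = d, self.t
                continue
            # Predecessors: diagonal step, repeat the template point
            # (input stretches), or advance the template within this frame.
            candidates = [
                (self.prev_cost[j - 1], self.prev_start[j - 1]),
                (self.prev_cost[j], self.prev_start[j]),
                (cost[j - 1], start[j - 1]),
            ]
            best_cost, best_start = min(candidates, key=lambda c: c[0])
            cost[j], start[j] = d + best_cost, best_start
        # Only the previous column is kept, so memory stays O(m).
        self.prev_cost, self.prev_start = cost, start
        end = self.t
        self.t += 1
        if cost[-1] <= self.threshold:
            return start[-1], end, cost[-1]
        return None


# Usage: the template appears at frames 2..4 of a noisy stream.
template = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
stream = [(5.0, 5.0), (5.0, 5.0), (0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (5.0, 5.0)]
spotter = ContinuousDPSpotter(template, threshold=0.5)
detections = [hit for x in stream if (hit := spotter.update(x)) is not None]
print(detections)  # [(2, 4, 0.0)]
```

Each call to `update` does O(m) work for an m-point template and keeps only one cost column, which is why this style of alignment suits real-time input. A practical segmenter would also need cost normalization and suppression of overlapping reports; this sketch simply reports every frame whose cumulative cost falls under the threshold.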

Short Summary

In a continuous stream of data, the start and end points of gestures are not known in advance. We present Machete, an approach that identifies these points in the data and thereby solves the segmentation problem.